In mathematics and physics, a vector space (also called a linear space) is a set whose elements, often called vectors, can be added together and multiplied ("scaled") by numbers called scalars. The scalars are often real numbers, but can be complex numbers or, more generally, elements of any field. The operations of vector addition and scalar multiplication must satisfy certain requirements, called vector axioms. Real vector spaces and complex vector spaces are kinds of vector spaces based on different kinds of scalars: real numbers and complex numbers.

Vector spaces generalize Euclidean vectors, which allow modeling of physical quantities, such as forces and velocity, that have not only a magnitude, but also a direction. The concept of vector spaces is fundamental for linear algebra, together with the concept of matrices, which allows computing in vector spaces. This provides a concise and synthetic way of manipulating and studying systems of linear equations.

Vector spaces are characterized by their dimension, which, roughly speaking, specifies the number of independent directions in the space. This means that, for two vector spaces over a given field and with the same dimension, the properties that depend only on the vector-space structure are exactly the same (technically, the vector spaces are isomorphic). A vector space is finite-dimensional if its dimension is a natural number. Otherwise, it is infinite-dimensional, and its dimension is an infinite cardinal. Finite-dimensional vector spaces occur naturally in geometry and related areas. Infinite-dimensional vector spaces occur in many areas of mathematics. For example, polynomial rings are countably infinite-dimensional vector spaces, and many function spaces have the cardinality of the continuum as a dimension.

Many vector spaces that are considered in mathematics are also endowed with other structures. This is the case of algebras, which include field extensions, polynomial rings, associative algebras and Lie algebras. This is also the case of topological vector spaces, which include function spaces, inner product spaces, normed spaces, Hilbert spaces and Banach spaces.

In this article, vectors are represented in boldface to distinguish them from scalars.[nb 1][1]

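A vector space over a field F is a nonempty set V together with a binary operation and a binary function that satisfy the eight axioms listed below. In this context, the elements of V are commonly called vectors, and the elements of F are called scalars.[2] The binary operation, called vector addition or simply addition, assigns to any two vectors v and w in V a third vector in V, written v + w and called the sum of these two vectors. The binary function, called scalar multiplication, assigns to any scalar a in F and any vector v in V another vector in V, denoted av.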

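For V to be a vector space, the following eight axioms must hold for all u, v and w in V, and all a and b in F.[3]

- Associativity of vector addition: $\mathbf u + (\mathbf v + \mathbf w) = (\mathbf u + \mathbf v) + \mathbf w$.
- Commutativity of vector addition: $\mathbf u + \mathbf v = \mathbf v + \mathbf u$.
- Identity element of vector addition: there exists an element $\mathbf 0 \in V$, called the zero vector, such that $\mathbf v + \mathbf 0 = \mathbf v$ for all $\mathbf v \in V$.
- Inverse elements of vector addition: for every $\mathbf v \in V$, there exists an element $-\mathbf v \in V$, called the additive inverse of $\mathbf v$, such that $\mathbf v + (-\mathbf v) = \mathbf 0$.
- Compatibility of scalar multiplication with field multiplication: $a(b\mathbf v) = (ab)\mathbf v$.
- Identity element of scalar multiplication: $1\mathbf v = \mathbf v$, where $1$ denotes the multiplicative identity in $F$.
- Distributivity of scalar multiplication with respect to vector addition: $a(\mathbf u + \mathbf v) = a\mathbf u + a\mathbf v$.
- Distributivity of scalar multiplication with respect to field addition: $(a + b)\mathbf v = a\mathbf v + b\mathbf v$.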
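The eight axioms can be spot-checked on a concrete space. The minimal Python sketch below assumes the space $\mathbf Q^2$ of pairs of rationals with componentwise operations; the helper names `add`, `scale` and `check_axioms` are illustrative, not taken from the source. Passing such a check on finitely many samples is evidence, not a proof.

```python
from fractions import Fraction as F
from itertools import product

# Illustrative model: vectors of Q^2 are tuples of Fractions.
def add(u, v):
    """Vector addition, componentwise."""
    return tuple(x + y for x, y in zip(u, v))

def scale(a, v):
    """Scalar multiplication, componentwise."""
    return tuple(a * x for x in v)

ZERO = (F(0), F(0))

def check_axioms(vectors, scalars):
    """True if all eight axioms hold for every sampled u, v, w and a, b."""
    for u, v, w in product(vectors, repeat=3):
        if add(u, add(v, w)) != add(add(u, v), w):                # associativity
            return False
    for u, v in product(vectors, repeat=2):
        if add(u, v) != add(v, u):                                # commutativity
            return False
    for v in vectors:
        if add(v, ZERO) != v:                                     # zero vector
            return False
        if add(v, scale(F(-1), v)) != ZERO:                       # inverse, taking -v = (-1)v
            return False
        if scale(F(1), v) != v:                                   # 1v = v
            return False
    for (a, b), (u, v) in product(product(scalars, repeat=2), product(vectors, repeat=2)):
        if scale(a, scale(b, v)) != scale(a * b, v):              # a(bv) = (ab)v
            return False
        if scale(a, add(u, v)) != add(scale(a, u), scale(a, v)):  # a(u + v) = au + av
            return False
        if scale(a + b, v) != add(scale(a, v), scale(b, v)):      # (a + b)v = av + bv
            return False
    return True

samples = [(F(1), F(2)), (F(-3, 4), F(0)), (F(5), F(-1, 3))]
scalars = [F(0), F(2), F(-1, 2)]
print(check_axioms(samples, scalars))  # True
```

Exact fractions are used so that equality tests are not disturbed by floating-point rounding.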

When the scalar field is the real numbers, the vector space is called a real vector space, and when the scalar field is the complex numbers, the vector space is called a complex vector space.[4] These two cases are the most common ones, but vector spaces with scalars in an arbitrary field F are also commonly considered. Such a vector space is called an F-vector space or a vector space over F.[5]

An equivalent definition of a vector space can be given, which is much more concise but less elementary: the first four axioms (related to vector addition) say that a vector space is an abelian group under addition, and the four remaining axioms (related to scalar multiplication) say that this operation defines a ring homomorphism from the field F into the endomorphism ring of this group.[6]

Subtraction of two vectors can be defined as $\mathbf v - \mathbf w = \mathbf v + (-\mathbf w)$.

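Direct consequences of the axioms include that, for every $s \in F$ and $\mathbf v \in V$, one has

- $0\mathbf v = \mathbf 0$,
- $s\mathbf 0 = \mathbf 0$,
- $(-1)\mathbf v = -\mathbf v$,
- $s\mathbf v = \mathbf 0$ implies $s = 0$ or $\mathbf v = \mathbf 0$.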

Even more concisely, a vector space is a module over a field.[7]

Bases are a fundamental tool for the study of vector spaces, especially when the dimension is finite. In the infinite-dimensional case, the existence of infinite bases, often called Hamel bases, depends on the axiom of choice. It follows that, in general, no basis can be explicitly described.[16] For example, the real numbers form an infinite-dimensional vector space over the rational numbers, for which no specific basis is known.

Consider a basis $(\mathbf b_1, \mathbf b_2, \ldots, \mathbf b_n)$ of a vector space $V$ of dimension $n$ over a field $F$. The definition of a basis implies that every $\mathbf v \in V$ may be written

$$\mathbf v = a_1 \mathbf b_1 + \cdots + a_n \mathbf b_n,$$

with $a_1, \ldots, a_n$ in $F$, and that this decomposition is unique. The scalars $a_1, \ldots, a_n$ are called the coordinates of $\mathbf v$ on the basis. They are also said to be the coefficients of the decomposition of $\mathbf v$ on the basis. One also says that the $n$-tuple of the coordinates is the coordinate vector of $\mathbf v$ on the basis, since the set $F^n$ of the $n$-tuples of elements of $F$ is a vector space for componentwise addition and scalar multiplication, whose dimension is $n$.

The one-to-one correspondence between vectors and their coordinate vectors maps vector addition to vector addition and scalar multiplication to scalar multiplication. It is thus a vector space isomorphism, which allows translating reasoning and computations on vectors into reasoning and computations on their coordinates.[17]
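As a concrete illustration, here is a minimal Python sketch assuming $V = \mathbf Q^2$ with a basis given by two vectors $\mathbf b_1, \mathbf b_2$ (the helper `coordinates` is a hypothetical name). It finds the coordinates by solving the 2-by-2 linear system with Cramer's rule, then spot-checks that the coordinate map sends sums to sums and scalar multiples to scalar multiples.

```python
from fractions import Fraction as F

def coordinates(v, b1, b2):
    """Return (a1, a2) with v = a1*b1 + a2*b2, for a basis (b1, b2) of Q^2."""
    det = b1[0] * b2[1] - b2[0] * b1[1]       # determinant of the matrix with columns b1, b2
    if det == 0:
        raise ValueError("b1 and b2 are linearly dependent, so not a basis")
    # Cramer's rule: replace one column of the basis matrix by v.
    a1 = (v[0] * b2[1] - b2[0] * v[1]) / det
    a2 = (b1[0] * v[1] - v[0] * b1[1]) / det
    return (a1, a2)

b1, b2 = (F(1), F(1)), (F(1), F(-1))
v, w = (F(3), F(1)), (F(0), F(2))
c = F(-5, 3)

cv, cw = coordinates(v, b1, b2), coordinates(w, b1, b2)
print(cv)  # (Fraction(2, 1), Fraction(1, 1)), i.e. v = 2*b1 + 1*b2

# The coordinate map is linear: it sends sums to sums ...
vw = (v[0] + w[0], v[1] + w[1])
assert coordinates(vw, b1, b2) == (cv[0] + cw[0], cv[1] + cw[1])
# ... and scalar multiples to scalar multiples.
assert coordinates((c * v[0], c * v[1]), b1, b2) == (c * cv[0], c * cv[1])
```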

Vector spaces stem from affine geometry, via the introduction of coordinates in the plane or three-dimensional space. Around 1636, French mathematicians René Descartes and Pierre de Fermat founded analytic geometry by identifying solutions to an equation of two variables with points on a plane curve.[18] To achieve geometric solutions without using coordinates, Bolzano introduced, in 1804, certain operations on points, lines, and planes, which are predecessors of vectors.[19] Möbius (1827) introduced the notion of barycentric coordinates.[20] Bellavitis (1833) introduced an equivalence relation on directed line segments that share the same length and direction, which he called equipollence.[21] A Euclidean vector is then an equivalence class of that relation.[22]

Vectors were reconsidered with the presentation of complex numbers by Argand and Hamilton and the inception of quaternions by the latter.[23] They are elements in $\mathbf R^2$ and $\mathbf R^4$; treating them using linear combinations goes back to Laguerre in 1867, who also defined systems of linear equations.

In 1857, Cayley introduced the matrix notation, which allows for harmonization and simplification of linear maps. Around the same time, Grassmann studied the barycentric calculus initiated by Möbius. He envisaged sets of abstract objects endowed with operations.[24] In his work, the concepts of linear independence and dimension, as well as scalar products, are present. Grassmann's 1844 work also exceeds the framework of vector spaces, since his considering multiplication led him to what are today called algebras. Italian mathematician Peano was the first to give the modern definition of vector spaces and linear maps in 1888,[25] although he called them "linear systems".[26] Peano's axiomatization allowed for vector spaces with infinite dimension, but Peano did not develop that theory further. In 1897, Salvatore Pincherle adopted Peano's axioms and made initial inroads into the theory of infinite-dimensional vector spaces.[27]

An important development of vector spaces is due to the construction of function spaces by Henri Lebesgue. This was later formalized by Banach and Hilbert, around 1920.[28] At that time, algebra and the new field of functional analysis began to interact, notably with key concepts such as spaces of p-integrable functions and Hilbert spaces.[29]

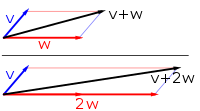

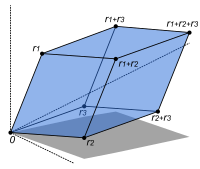

The first example of a vector space consists of arrows in a fixed plane, starting at one fixed point. This is used in physics to describe forces or velocities.[30] Given any two such arrows, v and w, the parallelogram spanned by these two arrows contains one diagonal arrow that starts at the origin, too. This new arrow is called the sum of the two arrows, and is denoted v + w. In the special case of two arrows on the same line, their sum is the arrow on this line whose length is the sum or the difference of the lengths, depending on whether the arrows have the same direction. Another operation that can be done with arrows is scaling: given any positive real number a, the arrow that has the same direction as v, but is dilated or shrunk by multiplying its length by a, is called multiplication of v by a. It is denoted av. When a is negative, av is defined as the arrow pointing in the opposite direction, with length |a| times that of v.[31]

The following shows a few examples: if a = 2, the resulting vector aw has the same direction as w, but is stretched to double the length of w (the second image). Equivalently, 2w is the sum w + w. Moreover, (−1)v = −v has the opposite direction and the same length as v (the blue vector pointing down in the second image).

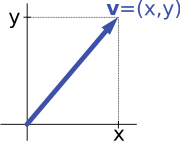

The second key example of a vector space is provided by pairs of real numbers x and y. The order of the components x and y is significant, so such a pair is also called an ordered pair. Such a pair is written as (x, y). The sum of two such pairs and the multiplication of a pair with a number are defined as follows:[32]

$$(x_1, y_1) + (x_2, y_2) = (x_1 + x_2,\; y_1 + y_2), \qquad a(x, y) = (ax,\; ay).$$

The first example above reduces to this example if an arrow is represented by the pair of Cartesian coordinates of its endpoint.

The simplest example of a vector space over a field F is the field F itself with its addition viewed as vector addition and its multiplication viewed as scalar multiplication. More generally, all n-tuples (sequences of length n) $(a_1, a_2, \ldots, a_n)$ of elements $a_i$ of F form a vector space that is usually denoted $F^n$ and called a coordinate space.[33] The case n = 1 is the above-mentioned simplest example, in which the field F is also regarded as a vector space over itself. The case F = R and n = 2 (so $\mathbf R^2$) reduces to the previous example.

The set of complex numbers C, that is, numbers that can be written in the form x + iy for real numbers x and y, where i is the imaginary unit, forms a vector space over the reals with the usual addition and multiplication: (x + iy) + (a + ib) = (x + a) + i(y + b) and c ⋅ (x + iy) = (c ⋅ x) + i(c ⋅ y) for real numbers x, y, a, b and c. The various axioms of a vector space follow from the fact that the same rules hold for complex number arithmetic. The example of complex numbers is essentially the same as (that is, it is isomorphic to) the vector space of ordered pairs of real numbers mentioned above: if we think of the complex number x + iy as representing the ordered pair (x, y) in the complex plane, then we see that the rules for addition and scalar multiplication correspond exactly to those in the earlier example.
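The correspondence x + iy ↔ (x, y) can be checked with Python's built-in `complex` type. In this minimal sketch the helper names `to_pair` and `from_pair` are illustrative, and the sample values have small integer parts so that the floating-point arithmetic involved is exact.

```python
def to_pair(z: complex) -> tuple[float, float]:
    """Map x + iy to the ordered pair (x, y)."""
    return (z.real, z.imag)

def from_pair(p: tuple[float, float]) -> complex:
    """Map the ordered pair (x, y) back to x + iy."""
    return complex(p[0], p[1])

z, w, c = complex(1, 2), complex(-3, 5), 4.0

# Addition of complex numbers matches componentwise addition of pairs.
assert to_pair(z + w) == (to_pair(z)[0] + to_pair(w)[0], to_pair(z)[1] + to_pair(w)[1])
# Multiplying by a real scalar matches scaling both components.
assert to_pair(c * z) == (c * z.real, c * z.imag)
# The two maps are mutually inverse.
assert from_pair(to_pair(z)) == z
print(to_pair(z + w))  # (-2.0, 7.0)
```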

More generally, field extensions provide another class of examples of vector spaces, particularly in algebra and algebraic number theory: a field F containing a smaller field E is an E-vector space, by the given multiplication and addition operations of F.[34] For example, the complex numbers are a vector space over R, and the field extension $\mathbf Q(i\sqrt 5)$ is a vector space over Q.

Functions from any fixed set Ω to a field F also form vector spaces, by performing addition and scalar multiplication pointwise. That is, the sum of two functions f and g is the function $f + g$ given by

$$(f + g)(w) = f(w) + g(w),$$

and similarly for multiplication. Such function spaces occur in many geometric situations, when Ω is the real line or an interval, or other subsets of R. Many notions in topology and analysis, such as continuity, integrability or differentiability, are well-behaved with respect to linearity: sums and scalar multiples of functions possessing such a property still have that property.[35] Therefore, the sets of such functions are vector spaces, whose study belongs to functional analysis.
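A minimal Python sketch of the pointwise operations, assuming Ω = R and modelling functions as ordinary Python callables on floats; `fadd` and `fscale` are hypothetical helper names. Each operation returns a new function, so sums and scalar multiples stay inside the function space, as the definition requires.

```python
from typing import Callable

Fn = Callable[[float], float]

def fadd(f: Fn, g: Fn) -> Fn:
    """Pointwise sum: (f + g)(w) = f(w) + g(w)."""
    return lambda w: f(w) + g(w)

def fscale(a: float, f: Fn) -> Fn:
    """Pointwise scalar multiple: (a f)(w) = a * f(w)."""
    return lambda w: a * f(w)

square = lambda x: x * x
line = lambda x: 3 * x + 1

h = fadd(square, fscale(2.0, line))   # h(x) = x^2 + 2(3x + 1)
print(h(2.0))  # 18.0
```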

Systems of homogeneous linear equations are closely tied to vector spaces.[36] For example, the solutions of

$$\begin{aligned} a + 3b + c &= 0 \\ 4a + 2b + 2c &= 0 \end{aligned}$$

are given by triples with arbitrary $a$, $b = a/2$, and $c = -5a/2$. They form a vector space: sums and scalar multiples of such triples still satisfy the same ratios of the three variables; thus they are solutions, too. Matrices can be used to condense multiple linear equations as above into one vector equation, namely

$$A\mathbf x = \mathbf 0,$$

where $A = \begin{bmatrix} 1 & 3 & 1 \\ 4 & 2 & 2 \end{bmatrix}$ is the matrix containing the coefficients of the given equations, $\mathbf x$ is the vector $(a, b, c)$, $A\mathbf x$ denotes the matrix product, and $\mathbf 0 = (0, 0)$ is the zero vector. In a similar vein, the solutions of homogeneous linear differential equations form vector spaces. For example,

$$f''(x) + 2f'(x) + f(x) = 0$$

yields $f(x) = a e^{-x} + b x e^{-x}$, where $a$ and $b$ are arbitrary constants, and $e^x$ is the natural exponential function.
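The claim that the solutions of the system $a + 3b + c = 0$, $4a + 2b + 2c = 0$ form a vector space can be spot-checked with exact rational arithmetic. This Python sketch parametrizes the solutions as $(a, a/2, -5a/2)$; the helpers `matvec` and `solution` are illustrative names, and a handful of samples is evidence rather than a proof.

```python
from fractions import Fraction as F

A = [[1, 3, 1],
     [4, 2, 2]]          # coefficients of a + 3b + c = 0 and 4a + 2b + 2c = 0

def matvec(M, x):
    """Matrix product M x for a list-of-rows matrix and a tuple vector."""
    return tuple(sum(m * xi for m, xi in zip(row, x)) for row in M)

def solution(a):
    """The solution triple (a, a/2, -5a/2) for a given free parameter a."""
    a = F(a)
    return (a, a / 2, -5 * a / 2)

x, y = solution(2), solution(-6)
s = tuple(p + q for p, q in zip(x, y))     # sum of two solutions
t = tuple(F(7, 3) * p for p in x)          # scalar multiple of a solution

# Every one of these triples is mapped to the zero vector (0, 0).
for vec in (x, y, s, t):
    assert matvec(A, vec) == (0, 0)
print(matvec(A, s))  # (Fraction(0, 1), Fraction(0, 1))
```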

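The relation of two vector spaces can be expressed by linear maps or linear transformations. They are functions that reflect the vector space structure, that is, they preserve sums and scalar multiplication:

$$f(\mathbf v + \mathbf w) = f(\mathbf v) + f(\mathbf w), \qquad f(a \cdot \mathbf v) = a \cdot f(\mathbf v)$$

for all $\mathbf v$ and $\mathbf w$ in $V$, and all $a$ in $F$.[37]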

An isomorphism is a linear map f : V → W such that there exists an inverse map g : W → V, which is a map such that the two possible compositions f ∘ g : W → W and g ∘ f : V → V are identity maps. Equivalently, f is both one-to-one (injective) and onto (surjective).[38] If there exists an isomorphism between V and W, the two spaces are said to be isomorphic; they are then essentially identical as vector spaces, since all identities holding in V are, via f, transported to similar ones in W, and vice versa via g.

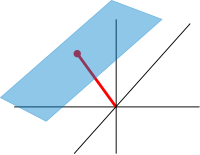

For example, the vector spaces of arrows in the plane and of ordered pairs of numbers from the introduction above (see § Examples) are isomorphic: a planar arrow v departing at the origin of some (fixed) coordinate system can be expressed as an ordered pair by considering the x- and y-components of the arrow, as shown in the image at the right. Conversely, given a pair (x, y), the arrow going by x to the right (or to the left, if x is negative) and y up (down, if y is negative) recovers the arrow v.[39]

Linear maps V → W between two vector spaces form a vector space $\operatorname{Hom}_F(V, W)$, also denoted L(V, W) or 𝓛(V, W).[40] The space of linear maps from V to F is called the dual vector space, denoted V∗.[41] Via the injective natural map V → V∗∗, any vector space can be embedded into its bidual; the map is an isomorphism if and only if the space is finite-dimensional.[42]

Once a basis of V is chosen, linear maps f : V → W are completely determined by specifying the images of the basis vectors, because any element of V is expressed uniquely as a linear combination of them.[43] If dim V = dim W, a 1-to-1 correspondence between fixed bases of V and W gives rise to a linear map that maps any basis element of V to the corresponding basis element of W. It is an isomorphism, by its very definition.[44] Therefore, two vector spaces over a given field are isomorphic if their dimensions agree and vice versa. Another way to express this is that any vector space over a given field is completely classified (up to isomorphism) by its dimension, a single number. In particular, any n-dimensional F-vector space V is isomorphic to $F^n$. However, there is no "canonical" or preferred isomorphism; an isomorphism $\varphi : F^n \to V$ is equivalent to the choice of a basis of V, by mapping the standard basis of $F^n$ to V via φ.

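Matrices are a useful notion to encode linear maps.[45] They are written as a rectangular array of scalars as in the image at the right. Any m-by-n matrix $A = (a_{ij})$ gives rise to a linear map from $F^n$ to $F^m$ by

$$\mathbf x = (x_1, x_2, \ldots, x_n) \mapsto \Big(\sum_{j=1}^n a_{1j} x_j,\; \sum_{j=1}^n a_{2j} x_j,\; \ldots,\; \sum_{j=1}^n a_{mj} x_j\Big),$$

where $\sum$ denotes summation, or by using the matrix multiplication of the matrix $A$ with the coordinate vector $\mathbf x$:

$$\mathbf x \mapsto A\mathbf x.$$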

Moreover, after choosing bases of V and W, any linear map f : V → W is uniquely represented by a matrix via this assignment.[46]
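A minimal Python sketch of the map $\mathbf x \mapsto A\mathbf x$ from $F^n$ to $F^m$, with integer entries standing in for elements of $F$; `matvec` is an illustrative helper. It spot-checks the two linearity conditions on sample vectors.

```python
def matvec(A, x):
    """Apply the m-by-n matrix A (list of rows) to x in F^n: entry i is sum_j a_ij x_j."""
    n = len(x)
    assert all(len(row) == n for row in A), "A must have n columns"
    return [sum(A[i][j] * x[j] for j in range(n)) for i in range(len(A))]

A = [[1, 2, 0],
     [0, -1, 3]]            # a 2-by-3 matrix, so a map F^3 -> F^2
x, y, a = [1, 0, 2], [4, -1, 1], 5

# Linearity: A(x + y) = Ax + Ay and A(a x) = a (Ax).
assert matvec(A, [xi + yi for xi, yi in zip(x, y)]) == [u + v for u, v in zip(matvec(A, x), matvec(A, y))]
assert matvec(A, [a * xi for xi in x]) == [a * u for u in matvec(A, x)]
print(matvec(A, x))  # [1, 6]
```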

The determinant det(A) of a square matrix A is a scalar that tells whether the associated map is an isomorphism or not: to be so, it is necessary and sufficient that the determinant is nonzero.[47] The linear transformation of $\mathbf R^n$ corresponding to a real n-by-n matrix is orientation-preserving if and only if its determinant is positive.
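For a 2-by-2 matrix the determinant is $ad - bc$. The Python sketch below (function names are illustrative) applies the two criteria just stated: nonzero determinant for an isomorphism, positive determinant for preserving orientation.

```python
def det2(m):
    """Determinant of the 2-by-2 matrix [[a, b], [c, d]]."""
    (a, b), (c, d) = m
    return a * d - b * c

def classify(m):
    """Return (is_isomorphism, preserves_orientation) for the map x -> m x on R^2."""
    d = det2(m)
    return d != 0, d > 0

rotation = [[0, -1], [1, 0]]    # quarter turn: det = 1
reflection = [[1, 0], [0, -1]]  # mirror in the x-axis: det = -1
projection = [[1, 0], [0, 0]]   # onto the x-axis: det = 0

print(classify(rotation))    # (True, True)
print(classify(reflection))  # (True, False)
print(classify(projection))  # (False, False)
```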

Endomorphisms, linear maps f : V → V, are particularly important since in this case vectors v can be compared with their image under f, f(v). Any nonzero vector v satisfying λv = f(v), where λ is a scalar, is called an eigenvector of f with eigenvalue λ.[48] Equivalently, v is an element of the kernel of the difference f − λ · Id (where Id is the identity map V → V). If V is finite-dimensional, this can be rephrased using determinants: f having eigenvalue λ is equivalent to

$$\det(f - \lambda \cdot \operatorname{Id}) = 0.$$

By spelling out the definition of the determinant, the expression on the left hand side can be seen to be a polynomial function in λ, called the characteristic polynomial of f.[49] If the field F is large enough to contain a zero of this polynomial (which automatically happens for F algebraically closed, such as F = C), any linear map has at least one eigenvector. The vector space V may or may not possess an eigenbasis, a basis consisting of eigenvectors. This phenomenon is governed by the Jordan canonical form of the map.[50] The set of all eigenvectors corresponding to a particular eigenvalue of f, together with the zero vector, forms a vector space known as the eigenspace corresponding to the eigenvalue (and f) in question.
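For a 2-by-2 matrix $A$ the characteristic polynomial is $\lambda^2 - \operatorname{tr}(A)\,\lambda + \det(A)$. This Python sketch (illustrative helper name; real eigenvalues only) finds its roots with the quadratic formula and checks $A\mathbf v = \lambda\mathbf v$ for one eigenvector.

```python
import math

def eigenvalues_2x2(A):
    """Real roots of det(A - t*Id) = t^2 - tr(A) t + det(A), or [] if none are real."""
    (a, b), (c, d) = A
    tr, det = a + d, a * d - b * c
    disc = tr * tr - 4 * det
    if disc < 0:
        return []            # no real eigenvalue, e.g. a rotation of the plane
    r = math.sqrt(disc)
    return sorted({(tr - r) / 2, (tr + r) / 2})

A = [[2, 1],
     [1, 2]]
print(eigenvalues_2x2(A))  # [1.0, 3.0]

# v = (1, 1) is an eigenvector for eigenvalue 3: A v = 3 v.
v = (1, 1)
Av = (A[0][0] * v[0] + A[0][1] * v[1], A[1][0] * v[0] + A[1][1] * v[1])
assert Av == (3 * v[0], 3 * v[1])
print(eigenvalues_2x2([[0, -1], [1, 0]]))  # []
```

Over C the rotation matrix would have the eigenvalues ±i, in line with the remark about algebraically closed fields; this real-only sketch simply returns an empty list in that case.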

In addition to the above concrete examples, there are a number of standard linear algebraic constructions that yield vector spaces related to given ones.

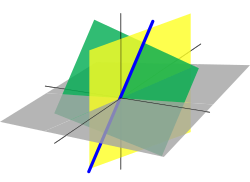

A nonempty subset $W$ of a vector space $V$ that is closed under addition and scalar multiplication (and therefore contains the $\mathbf 0$-vector of $V$) is called a linear subspace of $V$, or simply a subspace of $V$, when the ambient space is unambiguously a vector space.[51][nb 4] Subspaces of $V$ are vector spaces (over the same field) in their own right. The intersection of all subspaces containing a given set $S$ of vectors is called its span, and it is the smallest subspace of $V$ containing the set $S$. Expressed in terms of elements, the span is the subspace consisting of all the linear combinations of elements of $S$.[52]

Linear subspaces of dimension 1 and 2 are referred to as a line (also vector line) and a plane, respectively. If W is an n-dimensional vector space, any subspace of dimension 1 less, i.e., of dimension $n - 1$, is called a hyperplane.[53]

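The counterpart to subspaces are quotient vector spaces.[54] Given any subspace $W \subseteq V$, the quotient space $V/W$ ("$V$ modulo $W$") is defined as follows: as a set, it consists of

$$\mathbf v + W = \{\mathbf v + \mathbf w : \mathbf w \in W\},$$

where $\mathbf v$ is an arbitrary vector in $V$. The sum of two such elements $\mathbf v_1 + W$ and $\mathbf v_2 + W$ is $(\mathbf v_1 + \mathbf v_2) + W$, and scalar multiplication is given by $a \cdot (\mathbf v + W) = (a \cdot \mathbf v) + W$. The key point in this definition is that $\mathbf v_1 + W = \mathbf v_2 + W$ if and only if the difference of $\mathbf v_1$ and $\mathbf v_2$ lies in $W$.[nb 5] This way, the quotient space "forgets" information that is contained in the subspace $W$.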
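As a concrete instance, take $V = \mathbf Q^2$ and the line $W$ spanned by $(1, 1)$. Two vectors lie in the same coset exactly when their difference is a multiple of $(1, 1)$, that is, when they have the same value of $x - y$, so that number identifies the coset. The hypothetical helper `coset_key` in this Python sketch uses this to test equality in $V/W$ and to spot-check that the coset operations do not depend on the chosen representatives.

```python
from fractions import Fraction as F

def coset_key(v):
    """Identify v + W for W = span{(1, 1)}: v1 + W == v2 + W iff x - y agrees."""
    x, y = v
    return x - y

def same_coset(v1, v2):
    return coset_key(v1) == coset_key(v2)

u, u2 = (F(3), F(1)), (F(5), F(3))    # u2 - u = (2, 2) lies in W
v, v2 = (F(0), F(4)), (F(-1), F(3))   # v2 - v = (-1, -1) lies in W
assert same_coset(u, u2) and same_coset(v, v2)
assert not same_coset(u, v)

# The sum (u + v) + W does not depend on which representatives are used.
s1 = (u[0] + v[0], u[1] + v[1])
s2 = (u2[0] + v2[0], u2[1] + v2[1])
assert same_coset(s1, s2)

# Neither does scalar multiplication: a*u + W == a*u2 + W.
a = F(-7, 2)
assert same_coset((a * u[0], a * u[1]), (a * u2[0], a * u2[1]))
print(coset_key(s1))  # -2
```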

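The kernel $\ker(f)$ of a linear map $f : V \to W$ consists of vectors $\mathbf v$ that are mapped to $\mathbf 0$ in $W$.[55] The kernel and the image $\operatorname{im}(f) = \{f(\mathbf v) : \mathbf v \in V\}$ are subspaces of $V$ and $W$, respectively.[56]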

An important example is the kernel of a linear map $\mathbf x \mapsto A\mathbf x$ for some fixed matrix $A$. The kernel of this map is the subspace of vectors $\mathbf x$ such that $A\mathbf x = \mathbf 0$, which is precisely the set of solutions to the system of homogeneous linear equations belonging to $A$. This concept also extends to linear differential equations

$$a_0 f + a_1 \frac{df}{dx} + a_2 \frac{d^2 f}{dx^2} + \cdots + a_n \frac{d^n f}{dx^n} = 0,$$

where the coefficients $a_i$ are functions in $x$, too. In the corresponding map

$$f \mapsto D(f) = \sum_{i=0}^n a_i \frac{d^i f}{dx^i},$$

the derivatives of the function $f$ appear linearly (as opposed to $f''(x)^2$, for example). Since differentiation is a linear procedure (that is, $(f + g)' = f' + g'$ and $(c \cdot f)' = c \cdot f'$ for a constant $c$), this assignment is linear, called a linear differential operator. In particular, the solutions to the differential equation $D(f) = 0$ form a vector space (over R or C).[57]

The existence of kernels and images is part of the statement that the category of vector spaces (over a fixed field $F$) is an abelian category, that is, a corpus of mathematical objects and structure-preserving maps between them (a category) that behaves much like the category of abelian groups.[58] Because of this, many statements such as the first isomorphism theorem (also called rank–nullity theorem in matrix-related terms)

$$V / \ker(f) \cong \operatorname{im}(f)$$

and the second and third isomorphism theorems can be formulated and proven in a way very similar to the corresponding statements for groups.
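For a matrix $A$ viewed as a map $F^n \to F^m$, the first isomorphism theorem gives $\dim \ker A + \dim \operatorname{im} A = n$. This Python sketch assumes exact rational arithmetic and a hand-written Gaussian elimination (`rref` and `kernel_basis` are illustrative helpers, not library functions). It computes the rank as the number of pivots and a kernel basis from the free columns, and checks that each kernel vector really solves $A\mathbf x = \mathbf 0$.

```python
from fractions import Fraction

def rref(A):
    """Reduced row echelon form of A (list of rows) and its pivot columns."""
    M = [[Fraction(x) for x in row] for row in A]
    rows, cols = len(M), len(M[0])
    pivots, r = [], 0
    for c in range(cols):
        p = next((i for i in range(r, rows) if M[i][c] != 0), None)
        if p is None:
            continue                        # no pivot in this column: free variable
        M[r], M[p] = M[p], M[r]
        piv = M[r][c]
        M[r] = [x / piv for x in M[r]]      # scale the pivot row so the pivot is 1
        for i in range(rows):
            if i != r and M[i][c] != 0:     # clear the rest of the pivot column
                f = M[i][c]
                M[i] = [a - f * b for a, b in zip(M[i], M[r])]
        pivots.append(c)
        r += 1
        if r == rows:
            break
    return M, pivots

def kernel_basis(A):
    """One basis vector of ker A per free column, read off from the RREF."""
    M, pivots = rref(A)
    n = len(A[0])
    basis = []
    for free in (c for c in range(n) if c not in pivots):
        v = [Fraction(0)] * n
        v[free] = Fraction(1)
        for row, pc in enumerate(pivots):
            v[pc] = -M[row][free]
        basis.append(v)
    return basis

A = [[1, 2, 3],
     [2, 4, 6],
     [1, 0, 1]]
_, pivots = rref(A)
K = kernel_basis(A)
rank, nullity = len(pivots), len(K)
for v in K:
    assert all(sum(a * x for a, x in zip(row, v)) == 0 for row in A)
print(rank, nullity)  # 2 1
assert rank + nullity == len(A[0])
```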

The direct product of vector spaces and the direct sum of vector spaces are two ways of combining an indexed family of vector spaces into a new vector space.

The direct product $\prod_{i \in I} V_i$ of a family of vector spaces $V_i$ consists of the set of all tuples $(\mathbf v_i)_{i \in I}$, which specify for each index $i$ in some index set $I$ an element $\mathbf v_i$ of $V_i$.[59] Addition and scalar multiplication are performed componentwise. A variant of this construction is the direct sum $\bigoplus_{i \in I} V_i$ (also called coproduct and denoted $\coprod_{i \in I} V_i$), where only tuples with finitely many nonzero vectors are allowed. If the index set $I$ is finite, the two constructions agree, but in general they are different.
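One way to make the difference concrete, assuming the index set $I = \mathbf N$ and every $V_i = \mathbf R$: an element of the direct sum can be stored as a dictionary holding only its finitely many nonzero entries, while a general element of the direct product is an arbitrary function of the index. The names `dsum_add` and `dsum_scale` in this Python sketch are hypothetical.

```python
def dsum_add(u: dict[int, float], v: dict[int, float]) -> dict[int, float]:
    """Componentwise sum of two finitely supported families, dropping zero entries."""
    out = dict(u)
    for i, x in v.items():
        out[i] = out.get(i, 0.0) + x
    return {i: x for i, x in out.items() if x != 0.0}

def dsum_scale(a: float, u: dict[int, float]) -> dict[int, float]:
    """Componentwise scalar multiple; scaling by 0 gives the zero family {}."""
    return {i: a * x for i, x in u.items() if a * x != 0.0}

u = {0: 1.0, 5: -2.0}          # (1, 0, 0, 0, 0, -2, 0, 0, ...)
v = {5: 2.0, 7: 3.0}           # (0, 0, 0, 0, 0, 2, 0, 3, 0, ...)
print(dsum_add(u, v))          # {0: 1.0, 7: 3.0}

def product_ones(i: int) -> float:
    """An element of the direct product that is NOT in the direct sum:
    the constant family (1, 1, 1, ...) has infinitely many nonzero entries."""
    return 1.0
```

Sums and scalar multiples of finitely supported families stay finitely supported, which is why the direct sum is a subspace of the direct product.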

The tensor product $V \otimes_F W$, or simply $V \otimes W$, of two vector spaces $V$ and $W$ is one of the central notions of multilinear algebra, which deals with extending notions such as linear maps to several variables. A map $g : V \times W \to X$ from the Cartesian product $V \times W$ is called bilinear if $g$ is linear in both variables $\mathbf v$ and $\mathbf w$. That is to say, for fixed $\mathbf w$ the map $\mathbf v \mapsto g(\mathbf v, \mathbf w)$ is linear in the sense above, and likewise for fixed $\mathbf v$.

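The tensor product is a particular vector space that is a universal recipient of bilinear maps $g$, as follows. It is defined as the vector space consisting of finite (formal) sums of symbols called tensors

$$\mathbf v_1 \otimes \mathbf w_1 + \mathbf v_2 \otimes \mathbf w_2 + \cdots + \mathbf v_n \otimes \mathbf w_n,$$

subject to the rules[60]

$$\begin{aligned} a \cdot (\mathbf v \otimes \mathbf w) &= (a \cdot \mathbf v) \otimes \mathbf w = \mathbf v \otimes (a \cdot \mathbf w), \quad \text{where } a \text{ is a scalar,} \\ (\mathbf v_1 + \mathbf v_2) \otimes \mathbf w &= \mathbf v_1 \otimes \mathbf w + \mathbf v_2 \otimes \mathbf w, \\ \mathbf v \otimes (\mathbf w_1 + \mathbf w_2) &= \mathbf v \otimes \mathbf w_1 + \mathbf v \otimes \mathbf w_2. \end{aligned}$$

These rules ensure that the map $f$ from $V \times W$ to $V \otimes W$ that maps a tuple $(\mathbf v, \mathbf w)$ to $\mathbf v \otimes \mathbf w$ is bilinear. The universality states that given any vector space $X$ and any bilinear map $g : V \times W \to X$, there exists a unique map $u$, shown in the diagram with a dotted arrow, whose composition with $f$ equals $g$: $u(\mathbf v \otimes \mathbf w) = g(\mathbf v, \mathbf w)$.[61] This is called the universal property of the tensor product, an instance of the method, much used in advanced abstract algebra, of indirectly defining objects by specifying maps from or to this object.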
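For finite-dimensional coordinate spaces, $F^m \otimes F^n$ can be identified with $F^{mn}$, with $\mathbf v \otimes \mathbf w$ corresponding to the list of all products $v_i w_j$ (the Kronecker product of coordinate vectors). This Python sketch only spot-checks the three rules $a(\mathbf v \otimes \mathbf w) = (a\mathbf v) \otimes \mathbf w = \mathbf v \otimes (a\mathbf w)$, $(\mathbf v_1 + \mathbf v_2) \otimes \mathbf w = \mathbf v_1 \otimes \mathbf w + \mathbf v_2 \otimes \mathbf w$ and $\mathbf v \otimes (\mathbf w_1 + \mathbf w_2) = \mathbf v \otimes \mathbf w_1 + \mathbf v \otimes \mathbf w_2$ on sample vectors; the helper `tensor` is an illustrative name.

```python
from fractions import Fraction as F

def tensor(v, w):
    """Coordinates of v (x) w in F^(m*n): all products v_i * w_j, row-major."""
    return [a * b for a in v for b in w]

def add(x, y):
    return [a + b for a, b in zip(x, y)]

def scale(c, x):
    return [c * a for a in x]

v1, v2 = [F(1), F(2)], [F(0), F(-3)]
w1, w2 = [F(4), F(1), F(0)], [F(1), F(1), F(1)]
c = F(5, 2)

# Scalars move freely across the tensor sign.
assert scale(c, tensor(v1, w1)) == tensor(scale(c, v1), w1) == tensor(v1, scale(c, w1))
# Additivity in each slot separately (bilinearity).
assert tensor(add(v1, v2), w1) == add(tensor(v1, w1), tensor(v2, w1))
assert tensor(v1, add(w1, w2)) == add(tensor(v1, w1), tensor(v1, w2))
print(len(tensor(v1, w1)))  # 6, i.e. dim(F^2 (x) F^3) = 2 * 3
```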

From the point of view of linear algebra, vector spaces are completely understood insofar as any vector space over a given field is characterized, up to isomorphism, by its dimension. However, vector spaces per se do not offer a framework to deal with the question—crucial to analysis—whether a sequence of functions converges to another function. Likewise, linear algebra is not adapted to deal with infinite series, since the addition operation allows only finitely many terms to be added. Therefore, the needs of functional analysis require considering additional structures.[62]

A vector space may be given a partial order ≤, under which some vectors can be compared.[63] For example, $n$-dimensional real space $\mathbf R^n$ can be ordered by comparing its vectors componentwise. Ordered vector spaces, for example Riesz spaces, are fundamental to Lebesgue integration, which relies on the ability to express a function as a difference of two positive functions

$$f = f^+ - f^-,$$

where $f^+$ denotes the positive part of $f$ and $f^-$ the negative part.[64]
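The positive and negative parts are defined pointwise, $f^+(x) = \max(f(x), 0)$ and $f^-(x) = \max(-f(x), 0)$, so both are nonnegative and $f = f^+ - f^-$. This minimal Python sketch (illustrative helper names, functions modelled as Python callables on floats) checks the decomposition at a few points and shows that the componentwise order on $\mathbf R^n$ is only partial.

```python
def positive_part(f):
    """f+ (x) = max(f(x), 0)."""
    return lambda x: max(f(x), 0.0)

def negative_part(f):
    """f- (x) = max(-f(x), 0)."""
    return lambda x: max(-f(x), 0.0)

def leq(u, v):
    """Componentwise partial order on R^n: u <= v iff u_i <= v_i for every i."""
    return all(a <= b for a, b in zip(u, v))

f = lambda x: x ** 3 - x
fp, fm = positive_part(f), negative_part(f)
for x in (-2.0, -0.5, 0.0, 0.5, 2.0):
    assert fp(x) >= 0 and fm(x) >= 0
    assert fp(x) - fm(x) == f(x)

# Some vectors are incomparable in the componentwise order.
print(leq((1, 2), (3, 4)), leq((1, 5), (3, 4)), leq((3, 4), (1, 5)))  # True False False
```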

"Measuring" vectors is done by specifying a norm, a datum which measures lengths of vectors, or by an inner product, which measures angles between vectors. Norms and inner products are denoted $|\mathbf v|$ and $\langle \mathbf v, \mathbf w\rangle$, respectively. The datum of an inner product entails that lengths of vectors can be defined too, by defining the associated norm $|\mathbf v| := \sqrt{\langle \mathbf v, \mathbf v\rangle}$. Vector spaces endowed with such data are known as normed vector spaces and inner product spaces, respectively.[65]

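Coordinate space $F^n$ can be equipped with the standard dot product:

$$\langle \mathbf x, \mathbf y\rangle = \mathbf x \cdot \mathbf y = x_1 y_1 + \cdots + x_n y_n.$$

In $\mathbf R^2$, this reflects the common notion of the angle between two vectors $\mathbf x$ and $\mathbf y$, by the law of cosines:

$$\mathbf x \cdot \mathbf y = \cos\big(\angle(\mathbf x, \mathbf y)\big) \cdot |\mathbf x| \cdot |\mathbf y|.$$

Because of this, two vectors satisfying $\mathbf x \cdot \mathbf y = 0$ are called orthogonal. An important variant of the standard dot product is used in Minkowski space: $\mathbf R^4$ endowed with the Lorentz product[66]

$$\langle \mathbf x | \mathbf y\rangle = x_1 y_1 + x_2 y_2 + x_3 y_3 - x_4 y_4.$$

In contrast to the standard dot product, it is not positive definite: $\langle \mathbf x | \mathbf x\rangle$ also takes negative values, for example, for $\mathbf x = (0, 0, 0, 1)$. Singling out the fourth coordinate (corresponding to time, as opposed to three space dimensions) makes it useful for the mathematical treatment of special relativity.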
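A short Python sketch of the standard dot product, the angle it induces via the law of cosines, and the Lorentz product with the sign convention used here (time as the fourth coordinate). The function names are illustrative.

```python
import math

def dot(x, y):
    """Standard dot product x1*y1 + ... + xn*yn."""
    return sum(a * b for a, b in zip(x, y))

def norm(x):
    """Associated norm |x| = sqrt(<x, x>)."""
    return math.sqrt(dot(x, x))

def angle(x, y):
    """Angle between nonzero x and y from x . y = cos(angle) |x| |y|."""
    c = dot(x, y) / (norm(x) * norm(y))
    return math.acos(max(-1.0, min(1.0, c)))   # clamp against rounding error

def lorentz(x, y):
    """Lorentz product x1 y1 + x2 y2 + x3 y3 - x4 y4 on R^4."""
    return x[0] * y[0] + x[1] * y[1] + x[2] * y[2] - x[3] * y[3]

print(dot((1, 0), (0, 1)))                               # 0, so orthogonal
print(math.isclose(angle((1, 0), (1, 1)), math.pi / 4))  # True
print(lorentz((0, 0, 0, 1), (0, 0, 0, 1)))               # -1, not positive definite
```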

Convergence questions are treated by considering vector spaces $V$ carrying a compatible topology, a structure that allows one to talk about elements being close to each other.[67] Compatible here means that addition and scalar multiplication have to be continuous maps. Roughly, if $\mathbf x$ and $\mathbf y$ in $V$, and $a$ in $F$ vary by a bounded amount, then so do $\mathbf x + \mathbf y$ and $a\mathbf x$.[nb 6] To make sense of specifying the amount a scalar changes, the field $F$ also has to carry a topology in this context; a common choice is the reals or the complex numbers.

In such topological vector spaces one can consider series of vectors. The infinite sum

$$\sum_{i=1}^{\infty} f_i = \lim_{n \to \infty} (f_1 + \cdots + f_n)$$

denotes the limit of the corresponding finite partial sums of the sequence $f_1, f_2, \ldots$ of elements of $V$. For example, the $f_i$ could be (real or complex) functions belonging to some function space $V$, in which case the series is a function series. The mode of convergence of the series depends on the topology imposed on the function space. In such cases, pointwise convergence and uniform convergence are two prominent examples.[68]

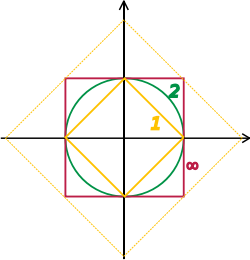

A way to ensure the existence of limits of certain infinite series is to restrict attention to spaces where any Cauchy sequence has a limit; such a vector space is called complete. Roughly, a vector space is complete provided that it contains all necessary limits. For example, the vector space of polynomials on the unit interval $[0, 1]$, equipped with the topology of uniform convergence, is not complete: by the Weierstrass approximation theorem, any continuous function on $[0, 1]$ can be uniformly approximated by a sequence of polynomials, and such a limit need not itself be a polynomial.[69] In contrast, the space of all continuous functions on $[0, 1]$ with the same topology is complete.[70] A norm gives rise to a topology by defining that a sequence of vectors $\mathbf v_n$ converges to $\mathbf v$ if and only if

$$\lim_{n \to \infty} |\mathbf v_n - \mathbf v| = 0.$$

Banach and Hilbert spaces are complete topological vector spaces whose topologies are given, respectively, by a norm and an inner product. Their study, a key piece of functional analysis, focuses on infinite-dimensional vector spaces, since all norms on finite-dimensional topological vector spaces give rise to the same notion of convergence.[71] The image at the right shows the equivalence of the 1-norm and ∞-norm on $\mathbf R^2$: as the unit "balls" enclose each other, a sequence converges to zero in one norm if and only if it does so in the other norm. In the infinite-dimensional case, however, there will generally be inequivalent topologies, which makes the study of topological vector spaces richer than that of vector spaces without additional data.
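On $\mathbf R^n$ the 1-norm and the ∞-norm satisfy $|\mathbf x|_\infty \le |\mathbf x|_1 \le n\,|\mathbf x|_\infty$, which is why convergence to zero in one implies convergence to zero in the other. This Python sketch (illustrative names, pseudo-random sample vectors with a fixed seed) spot-checks these inequalities for $n = 2$, the case pictured.

```python
import random

def norm1(x):
    """1-norm: sum of absolute values of the components."""
    return sum(abs(a) for a in x)

def norm_inf(x):
    """Infinity-norm (sup-norm): largest absolute value of a component."""
    return max(abs(a) for a in x)

random.seed(0)       # fixed seed so the spot-check is reproducible
n = 2
for _ in range(1000):
    x = [random.uniform(-10, 10) for _ in range(n)]
    # |x|_inf <= |x|_1 <= n |x|_inf, so each unit ball sits inside a scaled copy of the other.
    assert norm_inf(x) <= norm1(x) <= n * norm_inf(x)
print("inequalities hold on all samples")
```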

From a conceptual point of view, all notions related to topological vector spaces should match the topology. For example, instead of considering all linear maps (also called functionals) $V \to W$, maps between topological vector spaces are required to be continuous.[72] In particular, the (topological) dual space $V^*$ consists of continuous functionals $V \to \mathbf R$ (or to $\mathbf C$). The fundamental Hahn–Banach theorem is concerned with separating subspaces of appropriate topological vector spaces by continuous functionals.[73]

Banach spaces, introduced by Stefan Banach, are complete normed vector spaces.[74]

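A first example is the vector space $\ell^p$ consisting of infinite vectors with real entries $\mathbf x = (x_1, x_2, \ldots, x_n, \ldots)$ whose $p$-norm $(1 \le p \le \infty)$, given by

$$|\mathbf x|_\infty := \sup_i |x_i| \quad \text{for } p = \infty, \qquad |\mathbf x|_p := \Big(\sum_i |x_i|^p\Big)^{1/p} \quad \text{for } p < \infty,$$

is finite.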

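The topologies on the infinite-dimensional space $\ell^p$ are inequivalent for different $p$. For example, the sequence of vectors $\mathbf x_n = (2^{-n}, 2^{-n}, \ldots, 2^{-n}, 0, 0, \ldots)$, in which the first $2^n$ components are $2^{-n}$ and the following ones are $0$, converges to the zero vector for $p = \infty$, but does not for $p = 1$:

$$|\mathbf x_n|_\infty = \sup(2^{-n}, 0) = 2^{-n} \to 0, \quad \text{but} \quad |\mathbf x_n|_1 = \sum_{i=1}^{2^n} 2^{-n} = 2^n \cdot 2^{-n} = 1.$$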
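The example can be reproduced numerically. In this Python sketch, `x(n)` is a hypothetical helper returning only the finitely many nonzero entries of $\mathbf x_n$ (the first $2^n$ components, each equal to $2^{-n}$); the trailing zeros contribute nothing to either norm. Exact fractions avoid rounding.

```python
from fractions import Fraction

def x(n):
    """Nonzero part of x_n: 2**n copies of 2**-n (all later entries are 0)."""
    return [Fraction(1, 2 ** n)] * (2 ** n)

def norm_1(v):
    """1-norm of the finitely supported vector v."""
    return sum(abs(a) for a in v)

def norm_sup(v):
    """Sup-norm of the finitely supported vector v."""
    return max(abs(a) for a in v)

for n in range(6):
    print(n, norm_sup(x(n)), norm_1(x(n)))
# The sup-norm halves at each step (1, 1/2, 1/4, ...) while the 1-norm stays at 1.
```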

More generally than sequences of real numbers, functions $f : \Omega \to \mathbf R$ are endowed with a norm that replaces the above sum by the Lebesgue integral

$$|f|_p := \Big(\int_\Omega |f(x)|^p \, d\mu(x)\Big)^{1/p}.$$

The space of integrable functions on a given domain $\Omega$ (for example an interval) satisfying $|f|_p < \infty$, equipped with this norm, is called a Lebesgue space, denoted $L^p(\Omega)$.[nb 7]

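These spaces are complete.[75] (If one uses the Riemann integral instead, the space is not complete, which may be seen as a justification for Lebesgue's integration theory.[nb 8]) Concretely, this means that for any sequence of Lebesgue-integrable functions $f_1, f_2, \ldots, f_n, \ldots$ with $|f_n|_p < \infty$, satisfying the condition

$$\lim_{k, n \to \infty} \int_\Omega |f_k(x) - f_n(x)|^p \, d\mu(x) = 0,$$

there exists a function $f(x)$ belonging to the vector space $L^p(\Omega)$ such that

$$\lim_{k \to \infty} \int_\Omega |f(x) - f_k(x)|^p \, d\mu(x) = 0.$$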

Imposing boundedness conditions not only on the function, but also on its derivatives leads to Sobolev spaces.[76]

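Complete inner product spaces are known as Hilbert spaces, in honor of David Hilbert.[77] The Hilbert space $L^2(\Omega)$, with inner product given by

$$\langle f, g\rangle = \int_\Omega f(x) \overline{g(x)} \, dx,$$

where $\overline{g(x)}$ denotes the complex conjugate of $g(x)$,[78][nb 9] is a key case.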

By definition, in a Hilbert space, any Cauchy sequence converges to a limit. Conversely, finding a sequence of functions with desirable properties that approximate a given limit function is equally crucial. Early analysis, in the guise of the Taylor approximation, established an approximation of differentiable functions by polynomials.[79] By the Stone–Weierstrass theorem, every continuous function on $[a, b]$ can be approximated as closely as desired by a polynomial.[80] A similar approximation technique by trigonometric functions is commonly called Fourier expansion, and is much applied in engineering. More generally, and more conceptually, the theorem yields a simple description of what "basic functions", or, in abstract Hilbert spaces, what basic vectors suffice to generate a Hilbert space $H$, in the sense that the closure of their span (that is, finite linear combinations and limits of those) is the whole space. Such a set of functions is called a basis of $H$; its cardinality is known as the Hilbert space dimension.[nb 10] Not only does the theorem exhibit suitable basis functions as sufficient for approximation purposes, but, together with the Gram–Schmidt process, it also enables one to construct a basis of orthogonal vectors.[81] Such orthogonal bases are the Hilbert space generalization of the coordinate axes in finite-dimensional Euclidean space.
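The Gram–Schmidt process can be sketched for finitely many vectors in $\mathbf Q^n$ with the standard dot product. This minimal Python version uses exact fractions (the function name `gram_schmidt` is an assumption, not from the source); it produces orthogonal but not normalized vectors, since normalizing would require square roots. In an infinite-dimensional Hilbert space the same recursion is applied along a countable sequence.

```python
from fractions import Fraction

def dot(x, y):
    return sum(a * b for a, b in zip(x, y))

def gram_schmidt(vectors):
    """Return pairwise orthogonal vectors spanning the same subspace (zeros dropped)."""
    ortho = []
    for v in vectors:
        w = [Fraction(a) for a in v]
        for u in ortho:
            c = dot(w, u) / dot(u, u)          # coefficient of the projection onto u
            w = [a - c * b for a, b in zip(w, u)]
        if any(a != 0 for a in w):             # skip vectors already in the span
            ortho.append(w)
    return ortho

basis = gram_schmidt([[1, 1, 0], [1, 0, 1], [0, 1, 1]])
for i in range(len(basis)):
    for j in range(i + 1, len(basis)):
        assert dot(basis[i], basis[j]) == 0
print(len(basis))  # 3
```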

The solutions to various differential equations can be interpreted in terms of Hilbert spaces. For example, a great many fields in physics and engineering lead to such equations, and frequently solutions with particular physical properties are used as basis functions, often orthogonal.[82] As an example from physics, the time-dependent Schrödinger equation in quantum mechanics describes the change of physical properties in time by means of a partial differential equation, whose solutions are called wavefunctions.[83] Definite values for physical properties such as energy, or momentum, correspond to eigenvalues of a certain (linear) differential operator and the associated wavefunctions are called eigenstates. The spectral theorem decomposes a linear compact operator acting on functions in terms of these eigenfunctions and their eigenvalues.[84]

General vector spaces do not possess a multiplication between vectors. A vector space equipped with an additional bilinear operator defining the multiplication of two vectors is an algebra over a field (or F-algebra if the field F is specified).[85]

For example, the set of all polynomials forms an algebra known as the polynomial ring: using that the sum of two polynomials is a polynomial, they form a vector space; they form an algebra since the product of two polynomials is again a polynomial. Rings of polynomials (in several variables) and their quotients form the basis of algebraic geometry, because they are rings of functions of algebraic geometric objects.[86]

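Another crucial example is given by Lie algebras, which are neither commutative nor associative, but the failure to be so is limited by the constraints ($[x, y]$ denotes the product of $x$ and $y$):

- $[x, y] = -[y, x]$ (anticommutativity), and
- $[x, [y, z]] + [y, [z, x]] + [z, [x, y]] = 0$ (Jacobi identity).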

Examples include the vector space of $n$-by-$n$ matrices, with $[x, y] = xy - yx$, the commutator of two matrices, and $\mathbf R^3$, endowed with the cross product.
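Both defining constraints, anticommutativity $[x, y] = -[y, x]$ and the Jacobi identity, can be spot-checked for the commutator $[x, y] = xy - yx$ of 2-by-2 integer matrices. A minimal Python sketch with illustrative helper names:

```python
def matmul(x, y):
    """Product of two square matrices given as lists of rows."""
    n = len(x)
    return [[sum(x[i][k] * y[k][j] for k in range(n)) for j in range(n)] for i in range(n)]

def combine(x, y, s):
    """Entrywise x + s*y (s = 1 for a sum, s = -1 for a difference)."""
    return [[a + s * b for a, b in zip(r, q)] for r, q in zip(x, y)]

def bracket(x, y):
    """Commutator [x, y] = xy - yx, the Lie bracket on n-by-n matrices."""
    return combine(matmul(x, y), matmul(y, x), -1)

x = [[0, 1], [0, 0]]
y = [[0, 0], [1, 0]]
z = [[1, 2], [3, -1]]
zero = [[0, 0], [0, 0]]

# Anticommutativity: [x, y] = -[y, x].
assert bracket(x, y) == combine(zero, bracket(y, x), -1)
# Jacobi identity: [x, [y, z]] + [y, [z, x]] + [z, [x, y]] = 0.
jacobi = combine(combine(bracket(x, bracket(y, z)), bracket(y, bracket(z, x)), 1),
                 bracket(z, bracket(x, y)), 1)
assert jacobi == zero
print(bracket(x, y))  # [[1, 0], [0, -1]], nonzero, so this product is not commutative
```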

The tensor algebra $\operatorname T(V)$ is a formal way of adding products to any vector space $V$ to obtain an algebra.[88] As a vector space, it is spanned by symbols, called simple tensors

$$\mathbf v_1 \otimes \mathbf v_2 \otimes \cdots \otimes \mathbf v_n,$$

where the degree $n$ varies. The multiplication is given by concatenating such symbols, imposing the distributive law under addition, and requiring that scalar multiplication commute with the tensor product ⊗, much the same way as with the tensor product of two vector spaces introduced in the above section on tensor products. In general, there are no relations between $\mathbf v_1 \otimes \mathbf v_2$ and $\mathbf v_2 \otimes \mathbf v_1$. Forcing two such elements to be equal leads to the symmetric algebra, whereas forcing $\mathbf v_1 \otimes \mathbf v_2 = -\mathbf v_2 \otimes \mathbf v_1$ yields the exterior algebra.[89]

A vector bundle is a family of vector spaces parametrized continuously by a topological space X.[90] More precisely, a vector bundle over X is a topological space E equipped with a continuous map $\pi : E \to X$ such that for every x in X, the fiber π−1(x) is a vector space. For any vector space V, the projection X × V → X makes the product X × V into a "trivial" vector bundle. Vector bundles over X are required to be locally a product of X and some (fixed) vector space V: for every x in X, there is a neighborhood U of x such that the restriction of π to π−1(U) is isomorphic[nb 11] to the trivial bundle U × V → U. The case dim V = 1 is called a line bundle. Despite their locally trivial character, vector bundles may (depending on the shape of the underlying space X) be "twisted" in the large; that is, the bundle need not be globally isomorphic to the trivial bundle X × V. For example, the Möbius strip can be seen as a line bundle over the circle S1 (by identifying open intervals with the real line). It is, however, different from the cylinder S1 × R, because the latter is orientable whereas the former is not.[91]

Properties of certain vector bundles provide information about the underlying topological space. For example, the tangent bundle consists of the collection of tangent spaces parametrized by the points of a differentiable manifold. The tangent bundle of the circle S1 is globally isomorphic to S1 × R, since there is a global nonzero vector field on S1.[nb 12] In contrast, by the hairy ball theorem, there is no (tangent) vector field on the 2-sphere S2 which is everywhere nonzero.[92] K-theory studies the isomorphism classes of all vector bundles over some topological space.[93] In addition to deepening topological and geometrical insight, it has purely algebraic consequences, such as the classification of finite-dimensional real division algebras: R, C, the quaternions H and the octonions O.

The cotangent bundle of a differentiable manifold consists, at every point of the manifold, of the dual of the tangent space, the cotangent space. Sections of that bundle are known as differential one-forms.

Modules are to rings what vector spaces are to fields: the same axioms, applied to a ring R instead of a field F, yield modules.[94] The theory of modules, compared to that of vector spaces, is complicated by the presence of ring elements that do not have multiplicative inverses. For example, modules need not have bases, as the Z-module (that is, abelian group) Z/2Z shows; those modules that do (including all vector spaces) are known as free modules. Nevertheless, a vector space can be compactly defined as a module over a ring which is a field, with the elements being called vectors. Some authors use the term vector space to mean modules over a division ring.[95] The algebro-geometric interpretation of commutative rings via their spectrum allows the development of concepts such as locally free modules, the algebraic counterpart to vector bundles.
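The obstruction in the Z/2Z example is that every element is killed by a nonzero integer. In this small sketch (the function name is hypothetical), each element x of the Z-module Z/mZ has a positive integer n with n·x = 0, so no nonempty subset can be linearly independent over Z, while the empty set spans only {0}. Over the field F2, by contrast, the scalar 2 is itself 0, and {1} is a basis.

```python
def additive_order(x, m):
    """Smallest positive integer n with n*x = 0 in the Z-module Z/mZ."""
    n = 1
    while (n * x) % m != 0:
        n += 1
    return n

m = 2
# Over Z: every element is annihilated by a nonzero integer, so no nonempty
# subset of Z/2Z is linearly independent; hence Z/2Z has no basis.
print([additive_order(x, m) for x in range(m)])  # [1, 2]

# Over the field F_2: each element equals a*1 for exactly one scalar a in {0, 1},
# so {1} is a basis of F_2 as a vector space over itself.
print([(a * 1) % m for a in range(m)])  # [0, 1]
```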

Roughly, affine spaces are vector spaces whose origins are not specified.[96] More precisely, an affine space is a set with a free transitive vector space action. In particular, a vector space is an affine space over itself, by the map $V \times V \to V,\ (v, a) \mapsto a + v$. If W is a vector space, then an affine subspace is a subset of W obtained by translating a linear subspace V by a fixed vector x ∈ W; this space is denoted by x + V (it is a coset of V in W) and consists of all vectors of the form x + v for v ∈ V. An important example is the space of solutions of a system of inhomogeneous linear equations $Ax = b$, generalizing the homogeneous case discussed in the above section on linear equations, which can be found by setting $b = 0$ in this equation.[97] The space of solutions is the affine subspace x + V where x is a particular solution of the equation, and V is the space of solutions of the homogeneous equation (the nullspace of A).
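The description of the solution set as x + V can be computed directly. This sketch (function names are hypothetical) row-reduces the augmented matrix [A | b] over the rationals with `fractions.Fraction`, reads off one particular solution by setting the free variables to zero, and builds a basis of the nullspace of A, one vector per free variable. Every solution of Ax = b is then the particular solution plus a linear combination of those basis vectors.

```python
from fractions import Fraction

def rref(rows):
    """Reduced row echelon form over Q; returns (matrix, pivot_columns)."""
    m = [[Fraction(x) for x in row] for row in rows]
    pivots, r = [], 0
    for c in range(len(m[0])):
        p = next((i for i in range(r, len(m)) if m[i][c] != 0), None)
        if p is None:
            continue
        m[r], m[p] = m[p], m[r]
        m[r] = [x / m[r][c] for x in m[r]]
        for i in range(len(m)):
            if i != r and m[i][c] != 0:
                f = m[i][c]
                m[i] = [a - f * b for a, b in zip(m[i], m[r])]
        pivots.append(c)
        r += 1
        if r == len(m):
            break
    return m, pivots

def solve_affine(A, b):
    """Return (x0, basis) with {x : Ax = b} = x0 + span(basis), or None if inconsistent."""
    n = len(A[0])
    aug, pivots = rref([list(row) + [bi] for row, bi in zip(A, b)])
    if n in pivots:                 # a pivot in the b column means 0 = nonzero
        return None
    x0 = [Fraction(0)] * n          # particular solution: free variables set to 0
    for row, c in zip(aug, pivots):
        x0[c] = row[n]
    basis = []                      # one nullspace vector per free variable
    for f in (c for c in range(n) if c not in pivots):
        v = [Fraction(0)] * n
        v[f] = Fraction(1)
        for row, c in zip(aug, pivots):
            v[c] = -row[f]
        basis.append(v)
    return x0, basis

A = [[1, 2, 1], [2, 4, 0]]
b = [3, 2]
x0, basis = solve_affine(A, b)
print([str(c) for c in x0], [[str(c) for c in v] for v in basis])
# ['1', '0', '2'] [['-2', '1', '0']]
```

Here the solution set is the line (1, 0, 2) + t(−2, 1, 0): a translate of the one-dimensional nullspace, not a subspace itself, since it does not contain 0.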

The set of one-dimensional subspaces of a fixed finite-dimensional vector space V is known as projective space; it may be used to formalize the idea of parallel lines intersecting at infinity.[98] Grassmannians and flag manifolds generalize this by parametrizing linear subspaces of fixed dimension k and flags of subspaces, respectively.
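A point of the projective plane is a one-dimensional subspace of a three-dimensional space, so two nonzero vectors name the same point exactly when one is a scalar multiple of the other. The sketch below (function names are hypothetical) picks a canonical representative by scaling the first nonzero coordinate to 1. It then uses the standard homogeneous-coordinate conventions, an assumption not spelled out in the text: a line ax + by + c = 0 corresponds to (a, b, c), and two lines meet at the point given by their cross product. For the parallel lines x − y = 0 and x − y + 1 = 0, that point has third coordinate 0, i.e. it lies "at infinity".

```python
from fractions import Fraction

def normalize(v):
    """Canonical representative of the line spanned by a nonzero vector v:
    scale so that the first nonzero coordinate equals 1."""
    pivot = next((x for x in v if x != 0), None)
    if pivot is None:
        raise ValueError("the zero vector spans no one-dimensional subspace")
    return tuple(Fraction(x) / pivot for x in v)

def same_point(u, v):
    """True if u and v span the same one-dimensional subspace."""
    return normalize(u) == normalize(v)

def cross(u, v):
    return (u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0])

print(same_point((2, 4, 6), (1, 2, 3)))  # True: both span the same line

# Lines a*x + b*y + c = 0 written as (a, b, c).
meet = cross((1, -1, 0), (1, -1, 1))     # x - y = 0 and x - y + 1 = 0 (parallel)
print(normalize(meet) == (1, 1, 0))      # True: a point at infinity in direction (1, 1)
```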
